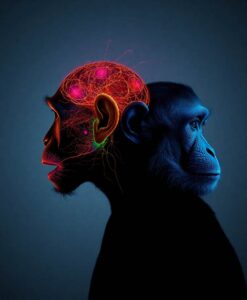

The emerging picture suggests a set of neural pathways that operate in parallel, rather than a single route that does everything. Comparing results across species reveals patterns shared by macaques and humans, which helps explain how these systems might have evolved. Computational work complements the biological data by proposing ways the brain reduces messy acoustic inputs to simpler internal codes that represent who is speaking and the emotional and social cues their voice carries.

Understanding voice processing matters for learning, social development, and inclusive design. If we can trace how the brain builds reliable voice representations, that knowledge could inform better hearing aids, more natural speech technology, and therapies for people who struggle with social communication. Follow the full article to see how these discoveries connect to human potential and where researchers are pushing next to fill in the remaining gaps.

Voices are among the most socially informative sounds in our auditory landscape, but only recently has a coherent picture of the neural machinery supporting their perception begun to emerge. New evidence from intracranial recordings, fMRI, and comparative primate research reveals specialized voice patches and neural pathways that rapidly extract identity, emotion, and social cues in parallel. These findings point to an evolutionarily conserved system with striking parallels to face processing. Emerging computational models further show how the brain may transform complex acoustic signals into abstract, low-dimensional representations. These advances raise key outstanding questions about the organisation, causal role, and computational principles of voice-selective circuits across primates.