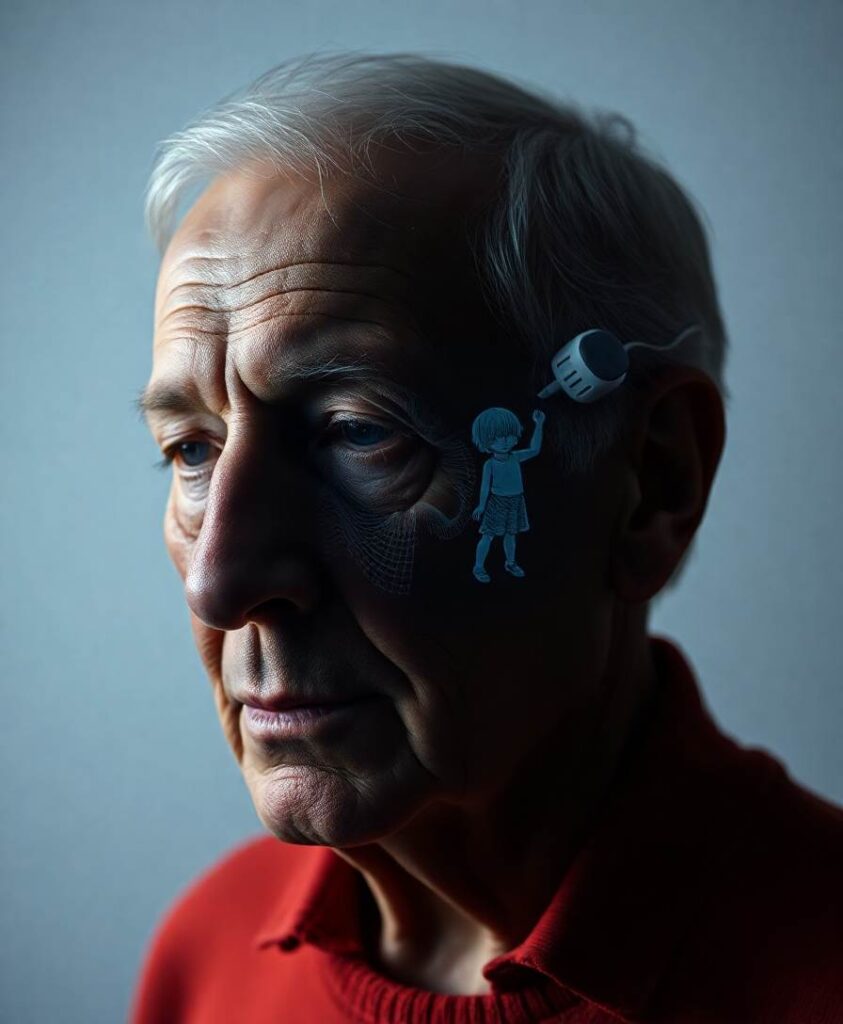

When technology steps into the role of offering empathic responses, the social signal those responses carry shifts. Automated empathy can feel less costly to produce, less anchored in personal history, and therefore less reliable as a predictor of future commitment. That change affects how we value emotional exchanges and may reshape the kinds of relationships we form, especially in environments where AI-mediated communication becomes common.

This topic matters for anyone thinking about human potential, inclusion, or the design of social technologies. If empathy serves as a credential for future care, then widespread use of AI to produce empathic messages could alter who gains access to real support. Follow the research to explore how designers, educators, and policymakers might preserve trustworthy social signals while using AI to widen access to emotional resources.

People devalue empathic responses that they believe were edited by artificial intelligence (AI). I propose that this reflects the role of empathy as a predictive social signal: genuine human empathy updates expectations about closeness and future commitment, a function that is weakened when empathy becomes cheap, automated, or outsourced.