Translating these human traits into machine behavior is both a technical and an ethical challenge. Today's machines excel at object-level tasks governed by clear rules and backed by abundant data, but they falter when a problem requires stepping back, questioning assumptions, or weighing social consequences. Building wise machines means designing AI that monitors its own reasoning, recognizes uncertainty, and adjusts its goals to align with human values. Such a shift would make systems more reliable in novel situations, easier for people to understand, and better partners in collaborative settings.

Measuring and training metacognitive skills in AI connects directly to human potential. If systems can learn when to ask for help, explain their limits, and account for diverse perspectives, they could amplify human strengths without amplifying harm. The article outlines benchmarks and training approaches that point toward safer, more inclusive tools. For readers interested in practical next steps and the trade-offs involved, the full piece shows how metacognition could move AI from clever to wise.

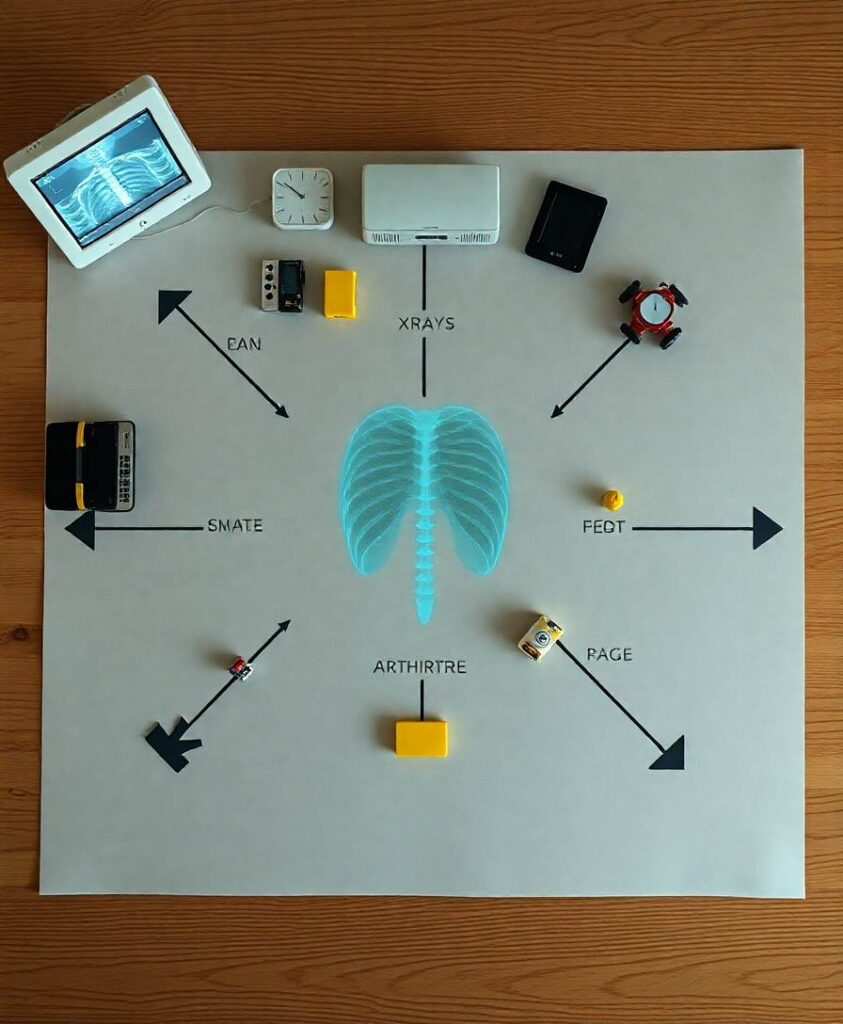

Although artificial intelligence (AI) has become increasingly smart, its wisdom has not kept pace. In this opinion article, we examine what is known about human wisdom and sketch a vision of its AI counterpart. We introduce human wisdom as strategies for solving intractable problems, meaning those outside the scope of analytic techniques. These include both ‘object-level’ strategies, such as heuristics (for managing problems), and ‘metacognitive’ strategies, such as intellectual humility, perspective-taking, or context adaptability (for managing how well object-level strategies fit the task). We argue that AI systems particularly struggle with this type of metacognition. Wise metacognition would lead to AI that is more robust to novel environments, more explainable to users, more cooperative with others, and safer, because it runs less risk of pursuing goals misaligned with those of its human users. We discuss how wise AI might be benchmarked, trained, and implemented.